How to parse a JSON Twitter dataset and not die trying

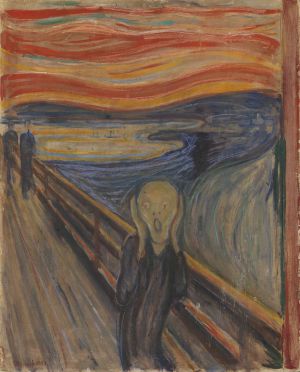

In June 2013 my patience was put to the test when a disk failure on a university server wiped out part of my precious data. As misfortunes never come singly, the backup server I had at home stopped working at almost the same time, with no way to recover the lost information. My reaction was not like Job's (Twitter gave, and the disk has taken away; blessed be the name of technology), because patience is not one of my virtues. First I cried (an advantage we women have for letting off steam), and once the emotional part was exhausted I moved on to the rational one and looked for a solution. One of the lost datasets was Eurovision 2013, which hurt twice as much because it was for a collaboration with a research group at the Complutense University. So as not to leave that research hanging, I turned to other researchers who had also collected Eurovision data from Twitter, and they generously sent me their dataset in JSON format. What looked as trivial as parsing the JSON into CSV kept me busy all day, because I ran into several "pebbles" along the way:

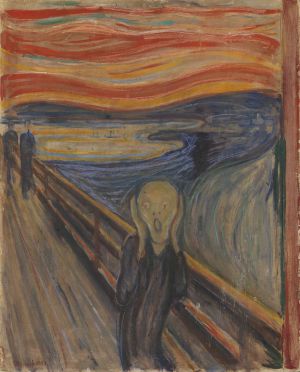

After exhaustive exception handling, I managed to convert the JSON to CSV, and now I can surprise the research group at the Complutense University.
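The exception handling described above can be sketched in Python. This is a minimal sketch, not the code used for the Eurovision dataset: it assumes the dump is in JSON Lines layout (one tweet object per line, a common way of saving data from Twitter's streaming API) and that only a few Twitter API v1.1 fields are needed. The column list and the function names `tweet_to_row` and `json_lines_to_csv` are hypothetical. Instead of aborting on the first bad record, it skips blank lines, truncated or corrupted JSON, and non-tweet control messages, and counts what it had to discard.

```python
import csv
import io
import json

# Hypothetical choice of columns; the names follow the Twitter API v1.1 tweet object.
FIELDS = ["id_str", "created_at", "screen_name", "lang", "text"]


def tweet_to_row(tweet):
    """Flatten one decoded tweet into a dict for csv.DictWriter, or None if it is not a tweet."""
    # Streaming dumps also contain control messages such as {"delete": ...} or
    # {"limit": ...}; they carry no "text" field, so they are skipped.
    if not isinstance(tweet, dict) or "text" not in tweet:
        return None
    user = tweet.get("user")
    if not isinstance(user, dict):  # missing or null "user" object
        user = {}
    return {
        "id_str": tweet.get("id_str", ""),
        "created_at": tweet.get("created_at", ""),
        "screen_name": user.get("screen_name", ""),
        "lang": tweet.get("lang", ""),
        "text": tweet["text"],
    }


def json_lines_to_csv(lines, out):
    """Write one CSV row per valid tweet in `lines` to `out`; return (written, skipped)."""
    # QUOTE_ALL wraps every field in quotes, so commas, quotes and line breaks
    # inside the tweet text stay within a single CSV field.
    writer = csv.DictWriter(out, fieldnames=FIELDS, quoting=csv.QUOTE_ALL)
    writer.writeheader()
    written = skipped = 0
    for line in lines:
        line = line.strip()
        if not line:  # ignore blank separator lines
            continue
        try:
            tweet = json.loads(line)
        except json.JSONDecodeError:
            # Truncated or corrupted record: count it and keep going.
            skipped += 1
            continue
        row = tweet_to_row(tweet)
        if row is None:
            skipped += 1
            continue
        writer.writerow(row)
        written += 1
    return written, skipped


# Usage with made-up records: one tweet, a blank line, a delete notice and a truncated line.
sample = [
    '{"id_str": "1", "user": {"screen_name": "fan"}, "lang": "en", "text": "Great show, really!\\nVoting now"}',
    "",
    '{"delete": {"status": {"id_str": "2"}}}',
    '{"id_str": "3", "text": "trunc',
]
buffer = io.StringIO()
print(json_lines_to_csv(sample, buffer))  # (1, 2)
```

For a real dump, open the input with `encoding="utf-8"` and the output with `newline=""`, as the `csv` module documentation recommends, and pass both file objects in; the returned skip count shows how much of the dataset had to be discarded.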
